You’ve probably heard the hype:

IoT is the next frontier in the information revolution that promises to make all our lives easier…

And that’s doubly true for hackers.

In this episode, I’m joined by Joe Grand, also known as Kingpin, a computer engineer, hardware hacker, product designer, teacher, advisor, daddy, honorary doctor, TV host, member of legendary hacker group L0pht Heavy Industries, proprietor of Grand Idea Studio (www.grandideastudio.com), and partner in offspec.io, a cryptocurrency wallet recovery service. He has been creating, exploring, and manipulating electronic systems since the 1980s and is here to take a look at the vulnerabilities hackers exploit in IoT (and how you can defend against them).

Join us as we discuss:

- Why, despite what many believe, hardware is no less vulnerable than software

- The common vulnerabilities in IoT devices and what you can do about them

- How security standards factor into IoT security

To hear this episode, and many more like it, you can subscribe to The Virtual CISO Podcast here.

If you don’t use Apple Podcasts, you can find all our episodes here.

Listening on a desktop & can’t see the links? Just search for The Virtual CISO Podcast in your favorite podcast player.

Time-Stamped Transcript

This transcript was generated primarily by an automated voice recognition tool. Although the tool is roughly 99% accurate, you may find some small discrepancies between the written content and the native audio file.

Narrator (Intro/Outro) (00:06):

You are listening to the Virtual CISO Podcast, a frank discussion providing the best information security advice and insights for security, IT and business leaders. If you’re looking for no-BS answers to your biggest security questions or simply want to stay informed and proactive, welcome to the show.

John Verry (00:25):

Hey there and welcome to yet another episode of the Virtual CISO Podcast. With you as always, your host John Verry. And with me today, someone I’m excited to talk to, Joe Grand. Hey Joe.

Joe Grand (00:35):

Hey, how’s it going?

John Verry (00:37):

Good, man. I’m looking forward to our conversation. I hope you’re as good during this conversation as you were during our introductory call, because I left that meeting going like, “Wow, this is going to be fun.”

Joe Grand (00:47):

Well, hopefully this is just as good. I’m caffeinated and I’m ready to go.

John Verry (00:52):

Something tells me you don’t need caffeine, Joe.

Joe Grand (00:55):

No, I don’t need too much of it.

John Verry (00:57):

Exactly. Let’s start simple. Tell us a little bit about who you are and what is it that you do every day?

Joe Grand (01:03):

Sure. My name is Joe Grand. Sometimes people know me as Kingpin, which is my old hacker name. I currently am straddling the fence between hardware hacking and product designing or engineering. I’m formally trained as a computer engineer, but I grew up in the hacker world and mostly what I do nowadays is teach hardware hacking to various organizations. Basically helping people understand it. If they encounter a piece of hardware, how to take it apart, figure out how it works. If there’s any security mechanisms, things that they can do to defeat the security. And then they’re either going to be from the offensive side of trying to do this, say for a pen test or analysis against an adversary or whatever, or from the defensive side to think about how attackers might attack their own product. And then they say, okay, if we know these things, now we can go ahead and try to make those more secure.

John Verry (01:54):

Yeah. Before we get to the real business of the conversation, what’s your drink of choice?

Joe Grand (02:01):

Well, I live in Portland, Oregon, so I would be remiss if I didn’t choose either coffee, beer or kombucha.

John Verry (02:09):

I thought you were going to go Rogue’s Dead Guy, right?

Joe Grand (02:12):

No, I’m a kombucha guy and just love various types of whatever I get my hands on.

John Verry (02:19):

GT Dave’s.

Joe Grand (02:20):

GT Dave’s is great. There’s a lot of good local, small, kind of microbrew kombucha places around here, too. And I’ve been tempted to make my own, but there’s something about growing bacteria, even if it’s good bacteria, that still freaks me out. I think I’ll just let the professionals do it.

John Verry (02:38):

Yeah, exactly. Are you a guy that believes in apple cider vinegar and the mother? I mean, that’s kombucha, right?

Joe Grand (02:46):

It is. I have some apple cider vinegar in my fridge right now making some apple shrub and not quite kombucha, but still a nice little drink.

John Verry (02:56):

Apple cider vinegar makes fantastic pickled onions, the best pickled onions I’ve ever had. Take just a red onion. And I forget, you add a little bit of water, and I don’t remember exactly how much, maybe, but to the apple cider vinegar.

Joe Grand (03:11):

Interesting.

John Verry (03:12):

And you get a good Bragg’s or somebody like that that’s really got the mother going. Absolutely. I’ve bought pickled onions and you can make far better pickled onions, and pickled onions liven everything up. You can put them on a sandwich, you can put them on a salad.

Joe Grand (03:23):

Well, pickled anything livens anything up. That’s the other thing here. Actually, I started pickling when we moved to Oregon.

John Verry (03:30):

Did you?

Joe Grand (03:31):

Five years ago, I started pickling, and there’s a Portlandia episode about “We can pickle that,” and it’s true. It’s like, can I throw it in there?

John Verry (03:39):

Troegs Brewery is one of my favorite places. And Troegs, they serve, and they’re not that good, I don’t think, but it was fun anyway, pickled fruit, like pickled strawberries. Definitely a very interesting flavor, but really cool. One of those things that you see on the menu and you’re like, “I got to try that.”

Joe Grand (03:55):

Right. That’s how they get you.

John Verry (03:56):

Exactly. All right. Your intro was perfect. Really what I wanted to chat with you about today is something which I think we should all be more cognizant and aware of and perhaps cautious of. This IoT. Let’s talk about, you see numbers and estimates that there may be as many as 75 billion IoT devices deployed by 2025. That’s roughly 10 for every human being on the face of the earth.

John Verry (04:27):

And we’re well along the way because we have clients that are deploying tens of thousands of devices in smart buildings and things of that nature. We’re definitely on our way there. But what I find remarkable when we’re chatting with the IoT device manufacturers, or we’re chatting with their management team, CISOs and product management folks, is how unaware they seem to be of the risk that these pose and their responsibility to manage that risk. The goal for today’s conversation is to talk about the fundamentals of what the IoT is, how these devices pose risk. If I’m a design engineer, how can I help manage that risk? If I am a product manager, how can I make sure my design engineers are managing that risk? And then I think at the end, we can touch on the idea of, if I’m someone who’s consuming, I’m putting these devices out, I’m using them as part of my organization, how do I also manage that risk or how do I know that my IoT device manufacturer is managing that risk on my behalf? Cool?

Joe Grand (05:29):

Yeah. I mean, it’s just a crazy world that we live in. I mean, we are surrounded by technology and it’s something where growing up in this world of seeing technology become more and more pervasive, I call myself a technology minimalist. I try not to employ a lot of these IoT devices.

John Verry (05:47):

That’s comical. That is comical. The guy who is telling people how to design them is not deploying them.

Joe Grand (05:58):

Because I know the kind of risks and the dangers and to me, those risks aren’t worth the benefit. But the problem is I can make that choice in my house. I can’t make that choice if I work in an office environment or if there’s an infrastructure that’s being employed that I have to be a part of. Just the IoT landscape, if you will, the number of devices that are out there is mind blowing, like you mentioned, and people tend to trust hardware more so than software, but people just tend to trust devices. Like when you go buy a device, it says implement security, blah, blah, blah. You plug it in and you use it. Not a lot of people really think about, well, is it actually secure? Are there design problems?

Joe Grand (06:42):

Were there back doors that an engineer accidentally left in there? Does the vendor think they are actually secure, but they’re not? Are they basing their design on some reference design that lots of other vendors use that’s vulnerable, or are they using some open, un-locked-down version of Linux, for example? And there’s all these different things. And it’s a hard challenge. And it really depends on who you are, whether you’re a designer or a vendor or an implementer of these things, how you need to approach the problem in order to try to be more secure.

John Verry (07:14):

Good. That’s exactly what I’d like to do. I know this sounds stupid, but I actually have asked those questions to the guys that wrote the OWASP ISVS. So that’s the Open Web Application Security Project IoT Security Verification Standard. By the way, the same guy who was the head of that effort is the head of the CSA’s, the Cloud Security Alliance’s, IoT standard. And I asked this question and it’s funny because you get a dumb look for a second, because you realize that they is it. Let’s start easy or maybe not easy. Deceptively challenging question. What is an IoT device?

Joe Grand (07:47):

That’s interesting. IoT, internet of things, my knee jerk reaction to that and I’m going to have two answers. My knee jerk reaction is any sort of resource constrained device that’s connected to the internet. We think about servers and computers and laptops and phones. Those are sort of high resource, I would say very powerful computers. And then when I think of IoT, it’s everything else. It’s the sensors. It’s the cameras, it’s the home automation. It’s the infrastructure, all these other things that are connected to the internet.

John Verry (08:18):

Smart refrigerators, smart cars, all of that [inaudible 00:08:20].

Joe Grand (08:20):

Things that have more of a specialized purpose that are connected to the internet. But now I would say, because of the integration of not only sensors and all of this stuff but also the computational power, these devices are not resource constrained anymore. IoT is just the internet. It’s just more stuff connected to the internet and everything that’s connected to the internet becomes a possible entry point for someone who wants to attack it.

John Verry (08:49):

Exactly. And it’s funny because it struck me when I saw that there’s an entity called ioXt Alliance. So they’re one of these entities that’s promulgating a standard, and Google actually had their Pixel phones certified as being ioXt compliant. Google called a Google Pixel phone an IoT device. Which, I was just, head explodes, and it’s like, wait a second. Are we basically saying every computer or every computing device that has access? And then if you look at California SB 327, it defines that law as applying to anything which talks off of the network that it’s on, which is also really interesting because if you’re on a network that’s nested inside of a network, theoretically, it’s not connected to the internet, but that still becomes an IoT device subject to SB 327.

Joe Grand (09:40):

Sure. Or even something like a mesh wireless network of sensors in a building that are all communicating. And then that data goes off.

John Verry (09:47):

Exactly.

Joe Grand (09:48):

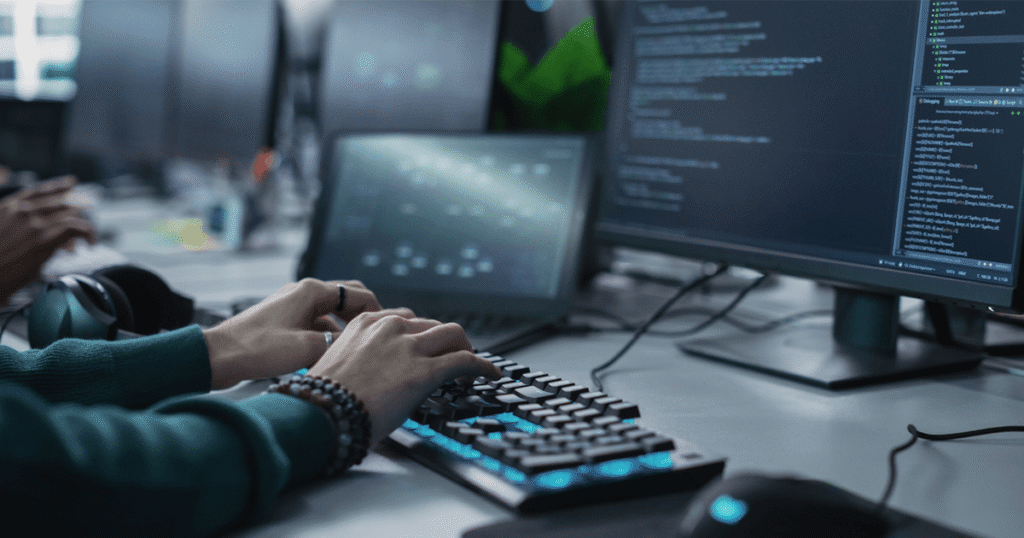

I mean, everything is connected. And really with hardware hacking, or I would say hacking in general, every way in is like a stepping stone to something else. As a hardware hacker, I’m looking at the hardware related devices that are connected to this internet of things or to the internet, where so many of these devices are extremely insecure. And part of that is because of the low cost, sort of commodity-ness of it, of hardware devices. You know, they have to be cheap and easy to set up and aimed at the consumer. And designing secure products is hard. It generally will require not only more engineering, but more computation or more chips specific to security, and those cost more money and they’re harder to work with and they’re not as available.

Joe Grand (10:35):

So we basically see a bunch of general purpose computers without a lot of security, all attached to the internet. And those are the things that we would go after. I wouldn’t go after somebody’s PC, I could, and target them through phishing or whatever else. But hitting IoT devices that are connected to somebody’s network is the easiest way in. Default passwords on a camera. Or you go to Shodan, which is basically a Google search engine for all sorts of crazy embedded devices connected to the internet. And you’ll see a lot of times things that don’t even have passwords that will drop you right in. It’s like the bulletin board systems and using modems back in the early and mid eighties where you would just connect to a computer and it would just let you in, or you’d have some environmental control system or an elevator control system or whatever just at your disposal. And it’s kind of where we are. It’s just slightly higher tech, but it’s still pretty much wide open.
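
To make the default-password point concrete, here is a minimal sketch of how a defender might audit their own device inventory for factory-default logins before an attacker finds them. The inventory records, field names, and the list of defaults are hypothetical illustrations and do not come from any real product or database.

```python
# Hypothetical sketch: flag inventory entries that still use a factory-default login.
# The device records and the list of defaults below are made-up illustrations.

KNOWN_DEFAULTS = {
    ("admin", "admin"),
    ("admin", "password"),
    ("root", "root"),
    ("admin", ""),  # no password at all
}


def find_default_logins(devices):
    """Return the names of devices whose (username, password) pair is a known default."""
    flagged = []
    for device in devices:
        login = (device["username"], device["password"])
        if login in KNOWN_DEFAULTS:
            flagged.append(device["name"])
    return flagged


inventory = [
    {"name": "lobby-camera", "username": "admin", "password": "admin"},
    {"name": "hvac-controller", "username": "admin", "password": ""},
    {"name": "door-sensor", "username": "svc-door", "password": "k3!Rz9-long-unique"},
]

print(find_default_logins(inventory))
```

In this made-up inventory the camera and the HVAC controller would be flagged, which are exactly the kinds of devices Joe describes as dropping an attacker straight in.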

John Verry (11:30):

I mean, it’s funny that you should mention Shodan. So the first time when we first got into IoT device testing and I was learning about Shodan. I went on to Shodan and in short order, I was sitting on the controls of an offshore wind farm. And literally those big, giant-

Joe Grand (11:50):

This is in 2021 or something [crosstalk 00:11:52].

John Verry (11:52):

This is probably about a year and a half ago, two years ago, something of that nature. And I was just like, “Holy.” Let’s drill this down a little bit. First off, let’s just kind of set a framework for people. IoT devices are only part of what I tend to refer to as an IoT ecosystem. Just to paint a picture for somebody, this device is not autonomous. In order for the device to do what it’s doing, in order for it to add to your shopping list because you tell Alexa, and I don’t have one here that’s going to beep up when I say that, or to trigger the sprinkler to turn on in a farm because the level of moisture on the farm is getting to a level where it needs watering. Talk about what are all the different pieces that communication takes place and what else is part of this ecosystem to make that device worthwhile?

Joe Grand (12:46):

Yeah. So you’re right. Every device or IoT device or thing or hardware generally is going to connect to some backend ecosystem or something. Even in the simplest perspective, think about a webcam that you have in your house that’s connected to the network, and then there’s going to be some web app or some backend server that’s capturing the data and letting you access the camera. You’re generally going to have devices and then either some server or some piece of software also accessible in the network that will let you communicate with the device. It’s not like you just have a single device to contend with, but you also have, say, if somebody’s hacking a wireless doorbell, you have your doorbell and then you have the app. Most people are going to hack the app that’s communicating with the doorbell because there tend to be more software-focused hackers anyway, but that interface is easy. You can reverse engineer that program, see if there’s any sort of communication vulnerabilities or if the credentials are transmitted in the clear or whatever it is.

Joe Grand (13:54):

Even if you just have a hardware device, you don’t just have a hardware device, you have your hardware device and everything around it. And again, each of those pieces is potentially vulnerable, where a lot of times people go, oh, well, if you get physical access to a piece of hardware, then it’s game over, which generally is true. Usually, if somebody gets physical access, they will eventually be able to figure out a way to defeat the security of it. But when you have all these network connected devices and the apps controlling the thing, you might not need the thing. Now you have the app and you can hack that app or do some proxy and change communication as it’s going back and forth between the app or the server and the device. And now you can start compromising devices without having physical access to the device, depending on how it’s designed.

John Verry (14:39):

Right. And one of the advantages that the device has generally speaking is it usually has physical security. It’s inside of your house. It’s inside of a server room, it’s inside of a building. The access to the device in theory, in most instances has some higher degree of physical security. Real quick, just to make sure we paint a picture for folks. We’ve got the device, we’ve got a way we talk to the device. Maybe it serves a little web application that you can use to configure it. I think about your home router. You type in the IP address, you type in admin password, and now you’re configuring your router. So you’ve got software running on the device. You might have a mobile app. It might be controlled by your mobile app, like your Nest thermostat or your doorbell.

John Verry (15:20):

It communicates across our network to something on the internet, a web application, think of it as a set of APIs or something of that nature. And then of course that you’ve got infrastructure that’s hosting those applications. In order for an IoT device to be secure, the IoT ecosystem, all of those components need to be relatively secure. I think if people have that picture in their head as we’re talking, it kind of helps them understand, we’re going to drill down on the device, but there’s a lot of other stuff that has to work right as well.

Joe Grand (15:51):

Yeah. That’s what makes security so tricky anyway, regardless of what aspect you’re working on is, as designers, we can try to anticipate what attackers are going to do, but we can’t anticipate everything. And attackers are always going to have the advantage because they can go after any part of the system that somebody may have made a mistake on. And designing secure hardware is hard. Designing secure web apps is hard, designing secure internet communication that can’t be compromised, all these different things, it’s very, very hard to design those systems and that’s exactly what attackers are taking advantage of.

John Verry (16:25):

Yeah. I agree. Let’s go device up. Let’s say I’m a product engineer. Let’s say I’m at an IoT device manufacturer, maybe I’m in charge of product management. What do I need to be aware of when I’m ensuring a device is secure? Let’s kind of walk through that process.

Joe Grand (16:45):

From a product level, board level, there are a lot of things to worry about. And basically the key thing is anything that a design engineer is using on the board to make it easier for them to design or for them to test or to manufacture or to repair, all of those features can end up being useful from an attack perspective as well. A lot of the hardware hacking that is successful is taking advantage of things, say like test points, which are little connections on a circuit board to make it easier for usually test equipment to take measurements of critical signals. Anytime an engineer puts a test point on the board means that that’s a signal that the engineer needs to get to for some reason. That’s what I’m going to go after is any signal that has that.

John Verry (17:28):

And real quick, that test port is commonly referred to as debug points, and that would be things like SWD or JTAG or UART. That’s what you’re referring to when you refer to these test points?

Joe Grand (17:41):

Yes and no. This is even at a lower level. It could be individual signals, but then if you go up a level, then you maybe have test points in a grouping, or you might have a connector that you see the footprint for the shape of the connector on the board, but not the actual connector there. And that’s more likely to be something like a debug interface. Which I consider like the root access of hardware, like a debug interface will let you, if you have access to it, generally you can read memory and write to memory and single step through code and change parameters and change registers everything like you would do with a software application but now you have physical access on the board.

Joe Grand (18:20):

And the challenge from a design perspective is things like debug interfaces and test points are so convenient during the manufacturing process that engineers use them, manufacturers when they’re building the products and they’re programming them and testing them, use those interfaces and getting rid of them after the fact is tricky.

Joe Grand (18:38):

And if they’re on the board or even on the chip and not brought out to the board, somebody’s going to find them. It’s these things that are convenient that we can look at. Also, another big thing is we think about monitoring network traffic using Wireshark to look at Wi-Fi traffic or Ethernet, whatever, to see what data’s coming across. And before everybody started using HTTPS, you could see a lot of stuff in the clear. When you’re dealing with most embedded systems, electronic systems, IoT devices, almost always the communication that happens between the chips, so say between the CPU or the microprocessor and external memory or some other external peripheral chip of some sort, whatever it is, is generally going to be in the clear. So we can use engineering tools to tap down onto those lines, just like using Wireshark, except now we’re looking at ones and zeros transmitting between two chips on a board, figure out what that data is.

Joe Grand (19:37):

And then either use it as is, or modify it in some way, spoof it, do whatever we need to do. And that’s a problem and a challenge because most of the peripheral chips that engineers are using don’t support encryption on the fly.
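
As a rough illustration of what "looking at ones and zeros transmitting between two chips" can mean in practice, the sketch below decodes logic-analyzer style samples of a clock line and a data line into bytes. It assumes an SPI-like bus in mode 0 (data latched on the rising clock edge, most significant bit first); the helper names and the simulated capture are hypothetical and stand in for what a real probe would record.

```python
# Hypothetical sketch: turn logic-analyzer samples of an SPI-style bus into bytes.
# Assumes mode 0 (data sampled on the rising clock edge), MSB first, and that
# chip-select is already active for the whole capture.


def decode_spi(clock, data):
    """Decode parallel lists of 0/1 samples (clock line, data line) into bytes."""
    bits = []
    previous = clock[0]
    for clk, bit in zip(clock[1:], data[1:]):
        if previous == 0 and clk == 1:  # rising edge: the receiver latches here
            bits.append(bit)
        previous = clk
    # Group complete runs of 8 bits into bytes, most significant bit first.
    out = bytearray()
    for i in range(0, len(bits) - len(bits) % 8, 8):
        value = 0
        for b in bits[i:i + 8]:
            value = (value << 1) | b
        out.append(value)
    return bytes(out)


def simulate_capture(payload):
    """Build the samples a probe would see while `payload` is clocked out."""
    clock, data = [0], [0]
    for byte in payload:
        for shift in range(7, -1, -1):
            bit = (byte >> shift) & 1
            clock += [0, 1]     # clock low, then high
            data += [bit, bit]  # data held stable across the rising edge
    clock.append(0)
    data.append(0)
    return clock, data


clock, data = simulate_capture(b"PIN=1234")
print(decode_spi(clock, data))
```

Because nothing on the simulated bus is encrypted, the decoded bytes are the original payload, which is the situation Joe describes for most peripheral chips.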

John Verry (19:50):

Right. That’s interesting to say that, because we’ve seen some people, it seems to be fairly common that the people that are aware that these debug interfaces might be risky, often the pins are gone. But I’ll see our guys actually solder new pins back on, or I’ve seen our guys, they’ll build the board in such a way they’ll be able to decompile the board. We’ll take the chips off the board, put them onto some type of, like, a breadboard kind of equivalent so that way we can sniff the traffic and make it easier to sniff.

John Verry (20:23):

I mean, even people that are somewhat aware of issues are not … I mean, if the idea, if they took a hardware hacking class like you teach, one of the advantages would be they would see what bad people like you, not you, the bad people are doing and bad people like us that are being paid to be bad people, to test these devices, they would see what we do and that would make them more cognizant of the fact, okay, I have to account for that as I’m actually architecting and designing the product.

Joe Grand (20:48):

That’s right. And the challenge though, is that a lot of the chips that we need to use just because they don’t support encryption and they’re kind of using these legacy inter-chip communication protocols, things like I2C or SPI that don’t have support for that. A lot of times you’re just stuck with what you get. If you’re creating your own custom systems, then you can say, all right, we need this external peripheral. Let’s see if we can build some sort of wrapper around it or have a custom FPGA or some other type of chip that will encrypt the data on top of that protocol, but that ends up being a lot harder. Most-

John Verry (21:24):

And more expensive. And when you’re producing devices that you’re trying to sell tens of thousands or hundreds of thousands of, a dollar per device becomes a lot of money, let alone five or $10 per device.

Joe Grand (21:36):

That’s the thing. It’s security versus convenience versus cost versus all the other constraints that engineers have. And a lot of times security ends up just falling by the wayside, also because not all engineers are focused on getting secure products. They’re focused on getting the system to work in the first place and then maybe they’re like, well, if there’s time, we can try to add security later, but that never works.

John Verry (21:57):

Time to market is always a problem. Quick question for you though. We started with already having physical access at the board level. Being cognizant and eliminating as much of that risk as we can, we can’t eliminate all that risk, let’s say in the design of a product, but we can actually minimize the chance that somebody gains that exposure either through the use case or through good physical packaging. Let’s talk about that level. Because sometimes we see packaging, like we see tamperproof packaging. We see packaging in such a way that destructing the packaging destructs the operation of the device, which is another way to do it. And then we see really bad packaging, which is incredibly easy to open and access or we even see things like debug ports being exposed intentionally, externally, out through the packaging. Talk a little bit about that packaging layer. We know we want to keep people from getting down at the board itself, what can we do to prevent that?

Joe Grand (22:59):

Right. And that’s a hard question because this also costs money and it also depends on the threat or what you’re trying to protect. What comes to mind is a hardware wallet, a cryptographic hardware wallet to store your cryptocurrency, which recently I just broke one that had $2 million on it. And eventually we’ll go public with some of that information, but that was advertised to have an anti-tamper mechanism or a physical security mechanism, which was an ultrasonically welded case. So you basically have the two pieces of plastic and they’re vibrated together to create what looks like a one piece housing. And if you open that, that’s going to leave visual, obvious indication that it’s been opened, which might be a good piece of security if it’s a device in an installation that is constantly being checked for tampering.

Joe Grand (23:58):

But in my case, I was here in my lab back here and I could cut it open and nobody’s checking to say, wait a second, you’ve just opened this device. Its anti-tamper didn’t really prevent me, the attacker, from getting to the circuitry. Just because you have anti-tamper doesn’t mean it’s going to prevent against everything. Some of the most common stuff we see, and all of it, I would say, is not really security through obscurity, but it’s security mechanisms that slow down the attack. It slows down the process to get to what you need. And it sometimes though gives people a false sense of security because they say, oh, we have anti-tamper. We have epoxy covering the components to make it really hard to get to, but those are not true security features.

Joe Grand (24:42):

They are just some physical prevention. And I think about these anti-tamper mechanisms, which I’ll give you a couple examples of in a second, which is basically physical security for electronics to prevent somebody from opening up the devices. They’re exactly like a home alarm system. So you have your home alarm, you have your window sensors, you have your wireless motion sensors. And that comprises your whole security system. With anti-tamper systems on a board you see that also. You might see sensors that detect when the device is open. You might see a switch there that gets depressed when the cover gets opened. You might see a light sensor that says, wait a second, I see light and I shouldn’t. You’ll see epoxy covering over and that’s going to give you a really hard coating either on top of the individual chips or potted in the whole area.

Joe Grand (25:30):

But once you know what those are, you can start to defeat them. Just like if I was going to break into a house and I knew that there were window sensors on the first floor, but nothing on the second floor, then maybe I would go in the second floor. But if there was a motion sensor on the second floor, then I’d have to say, okay, how do I defeat that? Once you know it’s there, you can start to put together an idea about how to defeat it. And generally all of those things can be defeated. So I wouldn’t rely on them, but they maybe are a good step depending on what your real worry is.
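
The alarm-system analogy can be sketched in a few lines of firmware-style logic. This is a minimal sketch under stated assumptions: the sensor inputs, the light threshold, and the response of wiping key material are all hypothetical choices made for illustration, and the transcript does not say how any particular device responds to a tamper event.

```python
# Hypothetical sketch of tamper-response logic; sensor names, the threshold,
# and the "wipe the key" response are illustrative assumptions.
from dataclasses import dataclass, field


@dataclass
class TamperMonitor:
    light_threshold: int = 50  # assumed sensor reading below which the case counts as dark
    secrets: bytearray = field(default_factory=lambda: bytearray(b"device-key"))
    tampered: bool = False

    def check(self, lid_switch_pressed, light_level):
        """Evaluate one pair of sensor readings; respond if the case looks open."""
        if not lid_switch_pressed or light_level > self.light_threshold:
            self.respond()
        return self.tampered

    def respond(self):
        """Overwrite key material in place and latch the tampered state."""
        for i in range(len(self.secrets)):
            self.secrets[i] = 0
        self.tampered = True


monitor = TamperMonitor()
monitor.check(lid_switch_pressed=True, light_level=3)    # closed and dark: nothing happens
monitor.check(lid_switch_pressed=True, light_level=200)  # light leaked in: key material wiped
print(monitor.tampered)
```

Joe’s caveat applies directly to a design like this: an attacker who knows there is only a lid switch and a light sensor can hold the switch down and work in the dark, so the mechanism slows an attack without guaranteeing anything.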

John Verry (26:00):

Yeah. I think that’s the key thing there. You have to take a risk based approach. Well, you want to make the fence high enough that the malicious individual, it’s not worth them taking the time to scale it. Either the return on investment. You have a crypto wallet that contains $2 billion, I’m going to be merciless in my pursuit of getting into that device. If I have the ability to screw with the farmer next door, and screw up the watering of a half acre of lettuce, I’m really not going to invest that much time in it. I do think you have to be very cognizant of the risk and what you’re protecting against. And I think in some cases, some of these barriers that are not definitive, but slow it down to a point where the return on investment isn’t there, are a good strategy.

Joe Grand (26:54):

If they’re implemented properly. I’ve seen devices that are protecting chips, but then right next to it, they have an open footprint that isn’t protected that connects on the same bus.

John Verry (27:04):

Exactly.

Joe Grand (27:05):

Unless you’re thinking about the entire attack process, just spotting some epoxy on something or implementing a switch, isn’t really going to stop anybody. I mean, security all comes down to, we hear this all the time of like, it’s all about just making the attack sufficiently hard or time consuming or expensive where it’s not worth the attacker to get access to.

John Verry (27:27):

Right. You don’t want a $500 corral for a $5 million horse, and you don’t want a $5 million corral for a $500 horse. Right size the security depending upon the device.
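
The right-sizing idea comes down to simple arithmetic: an attack is only attractive when the expected payoff exceeds what it costs the attacker. The sketch below is a deliberately simplified model; the function name, the dollar figures, and the success probabilities are hypothetical, and a real threat model would weigh far more than these three numbers.

```python
# Hypothetical sketch: all dollar figures and probabilities are illustrative assumptions.


def attack_is_worthwhile(asset_value, attack_cost, success_probability):
    """True if the attacker's expected payoff exceeds what the attack costs them."""
    return asset_value * success_probability > attack_cost


# High-value target: even an expensive, uncertain attack pays off.
print(attack_is_worthwhile(asset_value=2_000_000, attack_cost=50_000, success_probability=0.5))

# Low-value target: the same effort is not worth it.
print(attack_is_worthwhile(asset_value=500, attack_cost=50_000, success_probability=0.5))
```

One caution when picking `asset_value`: for a networked device it should reflect everything a compromise could lead to, not just the price of the device itself.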

Joe Grand (27:42):

And with IoT devices though, it’s like a lot of times you might only need physical access say to one device and hack it for fairly cheap. But now you have some piece of information that you can use as that stepping stone into a larger network that then makes that effort worthwhile. There’s really the whole kind of, I guess you would just call it risk management or what’s the key word everybody’s using? Threat modeling. You have to threat model as an engineer. The problem though is that, and I know this as an engineer and sitting in on security meetings trying to communicate this stuff to higher level management is security, again, it’s difficult, but it’s something that unless the people you’re trying to convince understand the problems, it makes it really hard.

Joe Grand (28:33):

You need to not only have your engineers understanding security, which are probably the most likely because they’re on the ground working on stuff, staying up to date with chips and technologies, and then you have your management, maybe that isn’t fully up to date, but they need to understand what the risks are. Engineers can go to them and say, “Hey, I have this idea to have something more secure. It’s going to prevent this attack. Can we do this?” And if they don’t understand that, they’re going to say no. Everybody up the chain needs to really understand the entire landscape of their devices, either from the design perspective or what they’re implementing in their own environment from other people.

John Verry (29:08):

Yeah. And we see the same challenge of different parts of the organization communicating risk using the same impact criteria and using things that a business can understand. Because we did some testing recently for an organization that does, I’ll loosely use the term building control. It’s vague enough to … But their organization gets it. They understand that there are tens of thousands of these devices and the implication to one of these devices being compromised is so significant that they were able to get management support. But the reason they were able to is that the CISO is a very talented individual who’s effective at communicating to management. You do need that. Let’s talk about this. We talked about, if somebody gets inside your device, that’s a really hard thing to protect against.

John Verry (30:01):

We talked about, if someone gets physical access to the device, that’s also hard, but there’s some more things we can do. With many IoT devices, getting physical access to the device is limited. We’ve got clients that put things like sensors in data centers, or digital lighting management systems that are being secured in a room inside of the building or something of that nature. Let’s talk about if I can’t get access to the device. If I can’t get physical access to the device, how am I going to compromise it?

Joe Grand (30:37):

Even before we get into that, as far as physical access, you might have an infrastructure like you mentioned that people can’t access that specific equipment, but if I’m going after say an industrial control system or something, and I know that this vendor or this company uses this type of technology, I will go find one. I might not have physical access to that exact one used in the building but I’ll find one that I can use as what I would say, a reference or a model to understand and reverse engineer. And then maybe I can find some remotely accessible vulnerability from this thing that applies to all devices. And that’s when you get into the next level. The physical access of the deployed device might not be possible. One example is I hacked the San Francisco smart parking meters back in 2009.

Joe Grand (31:25):

And that was a smart card based system. And I couldn’t just go chop a parking meter off the street and bring it back to my lab. But what I could do is search on eBay and I found some decommissioned parking meters that were same vendor, slightly different model, but it gave me enough. I bought it. I could take it apart in my lab, understand where the communication mechanisms were to access the system, the general design of the system. And then I could use that information to help create my attack, which I would then go try on the actual, real parking meter. Same thing here, even if I don’t have physical access, I’ll try to find a design that I can use and hope that the vendor who created the product or maybe there’s some misimplementation or by default, there’s a default password or incorrect network configuration or something.

Joe Grand (32:13):

Then once I know that, then I can target your particular infrastructure that uses that same device. And that’s where you don’t need physical access anymore. Now we go through the network or we try to find some out-of-band management interface or something. Because when we think about, not only from the physical level of design, testing, manufacturing, where you need to have access to a device, once a device is in the field, you’re still going to want to have some sort of maintenance access or administration access, configuration access, maybe that’s physical, but a lot of times these days it’s going to be remote. If we can find those interfaces, maybe they’re documented, probably not. We can find those and then maybe start attacking the device that way. Remotely over the network.

John Verry (32:58):

Right. And just to be clear, and very often with different devices, there are many actual networks. So it might be conventional Ethernet. It’s often conventional wireless local area networking. It can be BACnet, it can be Zigbee, it can be BLE. Or it can be conventional using any one of those to talk to a web-based app sitting on the device in and of itself. You have to be cognizant of the fact that every network, I think that’s a good term, every communications interface. Because there are some even custom communications interfaces. We’ve seen some vendors write their own communications interface so that there’s networks within networks that talk to each other.

Joe Grand (33:41):

Or they’re using 802.15.4, some RF or generic RF system, and then they’re implementing their own on top. I call all of those external interfaces. On a circuit board, I call them internal interfaces because they’re all the interfaces that are communicating on the board, the test points, debug, and then external is anything that communicates to the outside world or that’s accessible to the outside world. And that doesn’t mean it’s accessible to everybody. It could just be accessible on that network, but it means the device is accessible externally. And anytime there’s an external interface, we want to try to figure out how that interface works, what data’s being transmitted and then how do we exploit that depending on what our attack is.

John Verry (34:22):

Right. And one thing we didn’t explicitly mention that probably makes sense to mention is that, and I like your internal versus external interfaces. I’ll steal that by the way. I steal all smart people’s ideas. I’ve never had an original idea in my life, Joe. When we talk about external interfaces, they can also be physical interfaces. It can be USB, it can be RS-232. We see different physical interfaces that people can plug into. And it’s amazing how often we see an exposed interface and you plug something in and something happens.

Joe Grand (34:51):

That’s right. That’s exactly right. It’s anything, right. And sometimes too, the external interface is not only used for user access. Say it’s a keypad on something or USB or microSD card or whatever, but those interfaces can also be used for other functions that are hidden within it. There’s been times where a product might have say a VGA connection for something, but there’s unused pins on that connector that are used for administrator access. MicroSD card slots are really common not only for microSD card storage-

John Verry (35:30):

That is the worst idea of putting firmware on an SD card.

Joe Grand (35:33):

And then they’re multiplexed and used also as a debug interface. It’s like when you see an interface on a board, an external interface on a product, you can’t nowadays just assume it’s performing a single function. Now you have internal interfaces, your physical, external interfaces that you connect to, and then you have your wireless, external interfaces or network, external interfaces that are connected to the network. And now you can see how for every single product, this snowballs into a million different ways to start accessing it.

John Verry (36:05):

Right. You used a term recently and I kind of agree with it. You almost kind of alluded to gaining access into some of these debug interfaces, almost root access. And I think maybe you would, or wouldn’t agree with me, that why that’s so concerning is that if I can get to that point, I can usually get to the firmware. The software that runs on the device that controls the way it operates. And if I can get that off of the device and I can reverse compile it, I can look through the code, I can see if they’re using digital certificates. I might be able to figure out where the digital certs are being kept. And then if they don’t have protection mechanisms in place to ensure I can’t, I can insert code into the firmware, re-upload it, and now it’s a device that is doing things it was never intended to do.
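One kind of protection mechanism for this is having the device check a firmware image's integrity before accepting it. The sketch below is a minimal illustration and not a description of any product discussed here: it uses an HMAC-SHA256 tag from Python's standard library as a stand-in for a real code signature, and the key, image bytes, and function names are all hypothetical.

```python
import hashlib
import hmac


def tag_firmware(image: bytes, key: bytes) -> bytes:
    """Compute an authentication tag over the whole firmware image."""
    return hmac.new(key, image, hashlib.sha256).digest()


def firmware_is_authentic(image: bytes, tag: bytes, key: bytes) -> bool:
    """Return True only if the image still matches the tag it shipped with."""
    expected = tag_firmware(image, key)
    # compare_digest avoids leaking how many leading bytes matched.
    return hmac.compare_digest(expected, tag)


# Hypothetical demo values: a vendor key and a tiny stand-in "firmware image".
VENDOR_KEY = b"hypothetical-demo-key"
original = b"\x7fFIRMWARE v1.0 check_password(); boot();"
shipped_tag = tag_firmware(original, VENDOR_KEY)

# An attacker pulls the image, patches out a check, and tries to re-upload it.
patched = original.replace(b"check_password(); ", b"")

print(firmware_is_authentic(original, shipped_tag, VENDOR_KEY))
print(firmware_is_authentic(patched, shipped_tag, VENDOR_KEY))
```

Because HMAC is symmetric, the device would need to hold the same secret key, which runs straight into the key-storage problem raised later in the conversation. Designs that use asymmetric signatures keep only a public key on the device for that reason.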

Joe Grand (36:53):

That’s right. Yes, I agree with that. That’s exactly right. And really, I mean, one of the most common steps in the process when you’re hacking something physically is to get the firmware off. And there’s been times where people have contacted me and they’re like, “Hey, I’m hacking on this thing. Can you get the firmware off and I’ll do the rest.” Because hardware, nowadays really, it’s not that difficult, but people have their skills and they’ll outsource to people that might be better at one thing versus another. Usually, you take the product apart, you identify the components, you find where is the memory? Is there external memory? Is that storing crypto keys or certs or user information?

John Verry (37:34):

Passwords, IP addresses. I mean, stuff that shouldn’t be being kept there.

Joe Grand (37:38):

Right. And then you look for firmware, is that going to be external? Is that internal? You try to extract that and then it becomes a software problem. And then you start using Ghidra or IDA Pro and you reverse engineer that and figure out all of that. And that all comes back to the fact that these types of devices are computers running some code. And they’re just generally not as powerful as our laptops and phones, but they are still computers running code. And if we find those, then we can go that direction as well. That opens it up to people who, again, are not hardware people or not engineers per se. They’re skilled in software or they’re programmers that can now do this. And that opens it up even more.
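Before anyone opens Ghidra or IDA Pro, a first software-side pass is often just looking for readable strings in the extracted image. As a rough sketch, with a made-up memory dump and a hypothetical marker list, here is how passwords or key material left in a firmware dump might be spotted using only Python's standard library:

```python
import re

# Runs of six or more printable ASCII bytes, similar in spirit to `strings`.
PRINTABLE_RUN = re.compile(rb"[\x20-\x7e]{6,}")

# Hypothetical markers that often hint at secrets left in an image.
SUSPICIOUS_MARKERS = (b"password", b"passwd", b"private key", b"api_key")


def find_suspicious_strings(blob: bytes) -> list[tuple[int, str]]:
    """Return (offset, text) for printable runs that contain a marker."""
    hits = []
    for match in PRINTABLE_RUN.finditer(blob):
        text = match.group()
        lowered = text.lower()
        if any(marker in lowered for marker in SUSPICIOUS_MARKERS):
            hits.append((match.start(), text.decode("ascii")))
    return hits


# A made-up dump: binary noise around a stored password and a PEM key header.
dump = (
    b"\x00\x01\xff"
    b"admin_password=hunter2"
    b"\x00\x13\x02"
    b"-----BEGIN RSA PRIVATE KEY-----"
    b"\x00\xfe"
    b"boot ok"
    b"\x00"
)

for offset, text in find_suspicious_strings(dump):
    print(hex(offset), text)
```

Real firmware is usually compressed, packed, or split across partitions, so a scan like this is only a starting point, but it shows why secrets stored in plain form in external memory are among the first things found.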

John Verry (38:24):

Just out of curiosity, when you’re chatting with people, if somebody asks you this question, would you have an answer to it? Are they better off using a Linux-derived firmware stack or using something else? Because we see a lot of Linux-based firmware. And I think that probably, in some ways, it’s maybe easier to implement, maybe there’s more knowledge around it, but there’s also more knowledge of how to attack it on the bad guy side.

Joe Grand (38:58):

Yeah. I would say, if you could avoid implementing Linux, avoid it. The problem is, it’s easy for me to say that because I’m not designing a system. A lot of times if I’m designing an access point or router or an IoT device, the vendor, say it’s the vendor that makes the camera control chip or a network chip or the system on a chip, whatever it is, they’re going to say, “Hey, here’s our reference design. This is how our engineers designed it. You’re going to want to use that and then customize it for your own product.” And that tends to be, it’s going to be a Linux operating system. It’s probably going to be insecure. A lot of devices kind of inherit problems that the original design engineers have given them. And Linux is something. If you combine Linux with debug access through SWD or JTAG where you can now manipulate memory on the fly as the system’s running, you can basically skip over security checks. You can patch things, you can do whatever you want. And because there are so many known vulnerabilities in Linux-

John Verry (40:00):

And libraries that people use on top of them.

Joe Grand (40:03):

Yeah. And the libraries. And a lot of people that are super familiar with Linux, it’s almost like you’re giving them more options. But it’s hard to avoid a lot of times as well. And there are some attempts at some realtime operating systems that are, not that Linux is a realtime operating system, but realtime operating systems that do have more security related elements and implementing proper code signing and compartmentalization and things. And that’s going to be more complex than a Linux operating system or say a micro version of Linux that runs on some of these smaller devices. But that I think is the way to find operating systems that are actually designed for embedded systems that are secure or try to be secure and go with those, if you can, if you can start from scratch and do that. But again, a lot of times you can’t, you’re kind of stuck with what is recommended or what the vendor is telling you.

John Verry (40:59):

And even your hardware choice is going to influence that. I mean, with certain types of processors you’re going to be forced into certain types of operating systems that will be used, right?

Joe Grand (41:08):

Yeah. Unless you’re designing everything from the ground level where you can choose, maybe you choose an FPGA, a field-programmable gate array, where you can create your own, essentially your own kind of custom hardware inside of this chip instead of using off-the-shelf chips. And then you can start devising encryption on the fly and then you implement that in a better operating system, if you need an operating system. There’s all these things you can do if you have control of that. Generally, the chips you use are going to be dependent on what you’re trying to do and then how those chips run is going to be dependent on how the vendor has anticipated how you’re going to run it. And you could try to break out of that and do something else, but engineers don’t like to recreate the wheel and we’re going to use what the vendor has given us whether it’s good or not. That’s what we use as the starting point.

Joe Grand (42:01):

And if you can try to lock things down from there, that’s a good step, but we don’t see that a lot. And then also we haven’t even talked about the upgrade model. The firmware updates and where IoT devices-

John Verry (42:15):

We do not have enough time to get into that. I was going to, generically at the end, say, look, there’s a whole side of this that’s the maintenance of this on a go-forward basis. And that ties into one of the next two questions I was going to ask you. Let me ask you the first question first. I’m assuming the conversation that we just had is basically what, and a lot of the conversations at a much deeper level, that you have when you teach your hardware hacking training to engineers and product management people.

Joe Grand (42:44):

Yes. Exactly. Basically my class is generally intended for technical people that haven’t really gotten involved in the hardware hacking side of things. And the goal really is to get people to think like hackers and to understand what an attacker is going to do in various methods to their product. And not all of the steps that we do in class are going to be relevant to every device because we talked about, every device is going to be different as far as what the threats are and the risks and what attackers are going to do. But it basically is giving people this overarching view of all the different stuff that could happen. Kind of like what we’ve talked about today, and then do some hands on exercises to validate that and also realize like, “Oh, wait, this isn’t that hard for somebody to do to sniff communication or to connect up to some administration port.”

Joe Grand (43:39):

And once they realize that they go, oh, wait I didn’t realize that somebody could do this that easily. And then they start thinking about it. And that’s really the goal, is just to educate more people to think about it more and hopefully that snowballs into better products and more secure products along the line.

John Verry (43:56):

Part of that better products and more secure products comes through guidance, standards, legislation. We’ve seen a lot. I mean, like the good news is, I would say, is that the few people who do recognize the risk are communicating the risk well and are trying to provide the right guidance. In the past couple years, California SB 327, if you’re a device manufacturer, they set some standards, very low bar, by the way. But they’ve set a standard for devices that are sold in California. We talked about CSA, Cloud Security Alliance, they’ve got great guidance out there. ENISA has great guidance out there. OWASP ISVS is great guidance. ENISA has put out great guidance for these devices. There’s a lot of good guidance out there. In fact, I’d argue there’s too much guidance right now actually, because it gets confusing.

John Verry (44:44):

And that’s a common conversation I’m having with our clients. And then you’ve got the guidance that’s related to the other stuff that’s outside of the device. The OWASP guidance around the mobile application, around the actual APIs, the guidance around securing the cloud, because you’ve got cloud infrastructure. And now, most recently, we’ve got the presidential executive order, which has this concept of labeling of IoT devices for security. And you’re probably familiar with Singapore. Finland came out with a standard and now Singapore adopted either the Finland standard or a slightly modified version of the Finland standard. What are your thoughts on all the guidance that we’re seeing? How do you steer your clients around that?

Joe Grand (45:22):

I have mixed feelings about the guidance.

John Verry (45:25):

Because some of it’s probably not the guidance you would give.

Joe Grand (45:27):

And also it gives people a false sense of security. From a positive perspective, all of these different lists of recommendations are good because if somebody doesn’t understand security or even if they do, and they need to know what’s the most important things to protect. That’s good. Having lists is better than not knowing how to approach the problem, but it does lead to the false sense of security because implementing what is recommended is extremely hard. If you think about even something like FIPS 140-2 is a really common security standard for cryptographic devices. You have common criteria as well. And those are basically checklists of things. You need to have certain level of physical security, certain encryption of data at rest and blah, blah, blah, all of these things, which are great to strive for.

Joe Grand (46:23):

But there are a lot of devices that claim to have passed the FIPS 140 evaluation that are still vulnerable, because humans are still implementing the stuff. And if a human says, okay, I need to encrypt some data, but they don’t understand how to properly store the key or if they’re still using a general purpose microcontroller, and they’re storing a key in some accessible area of memory or external memory, they’re still following the standard and doing things properly and they can check it off their list, but it’s not implemented in a secure way to prevent an attacker. You could have encryption, but if the key is accessible, the encryption doesn’t matter. The problem is, like you said, there are so many different guidances, people telling you what to do. Using those as a starting point is good, but really verifying that you’ve implemented it right, that’s the hard part.

Joe Grand (47:18):

Getting the certification and checking off the boxes is not the hard part, but it’s actually making sure that you’ve done it the right way. And that’s the thing is I think we’re going to see more and more products that are like, “Buy us, we’re secure, we’ve passed this test,” but it doesn’t really, from a hacker perspective, when I see that I say, “Okay, they’ve followed some of the steps so maybe they’re doing certain things that I can now use as starting points to look for,” but I don’t necessarily say, okay, they’re secure. The vendor might say, “We’re secure. We passed the test.” But from a hacker perspective, no, that is just a brand or [crosstalk 00:47:53].

John Verry (47:53):

But they’re more secure than somebody who didn’t tell you they passed the test or wasn’t even aware there was a test that they should pass.

Joe Grand (48:00):

Maybe.

John Verry (48:01):

Well, maybe.

Joe Grand (48:01):

Depends on how we implement it.

John Verry (48:03):

Exactly. I mean, listen, isn’t that always the case. The net out is, yes, if you do everything right. But doing everything right isn’t easy.

Joe Grand (48:14):

It’s hard. I mean, having a starting point and a checklist does not hurt.

John Verry (48:19):

At least, if nothing else, it raises your floor. So as an example with OWASP, I’m a big fan of the Application Security Verification Standard. If you’re writing the APIs that are backending your cloud, your IoT ecosystem, your developers knowing the 192 things that should be done right makes it far more likely they get done than if they don’t know those 192 things. You can take a developer who is not security conscious and raise their level, right?

Joe Grand (48:50):

It probably reduces the attack surface. And it will lock some things down. It’s what we used to call, at the L0pht, which was a hacker group I was involved in back in the day, low-hanging fruit. All of the things that are really easy to pick off. If you can get rid of those, either from a network level, application level, software level, firmware level, hardware level, you get rid of that low-hanging fruit by following these recommendations, that’s probably going to be better than your competitor, for example, and it might be slightly harder to break, but I would just say, if you’re using those certifications, and sometimes you’re going to have to, just don’t-

John Verry (49:29):

Don’t blindly rely on them.

Joe Grand (49:31):

Don’t blindly rely on it and don’t think that you are going to be more secure because you’ve done those things. Just be aware. Be aware that you could possibly still be compromised. Don’t use that as a marketing thing to try to get more sales. You still are going to need to have somebody validate your implementation and all of these things. Don’t just blindly trust what somebody is showing you.

John Verry (49:57):

I agree completely. We’ve concentrated on the engineering side and I think the question I would have for you. If I’m a company that wants to leverage IoT devices, if I’m a spinach manufacturer and there’s some really cool technology out there where you put these IoT devices sitting inside of the lettuce containers and we can track lettuce from field all the way to the store. We know what temperature was it in the trailer? How long was it in the trailer? All that stuff is possible to track. We can do the same thing right now with ground beef. I can go from the cattle horn, a sensor, RFID sensor on a cattle’s horn all the way down to a pound of chop meat sitting in a package at your grocer.

John Verry (50:39):

That can be tracked now. There’s valuable things that can be done with this technology. But if I’m a company, if I’m thinking about licensing or using a technology from one of these companies, you just painted a more negative picture than I hoped you’d paint in that, I do think we can do a lot to secure this. If I’m somebody who was looking to purchase this technology, what should I look for? If you were going to put something in your house, you said you don’t like putting stuff in your house. If you wanted to put something in your house, if there was enough return on investment or return on value for you, what would you look for to kind of feel good about this entity short of doing your own testing cause our clients can’t do that?

Joe Grand (51:21):

That’s an interesting point because one of my first things was going to say, to question the vendor, to basically have them show what have they done to properly secure their devices? What steps have they taken? But then at the same time, my second recommendation would be well, before you implement anything, you need to test it and validate it and understand on your own. But you just said that’s hard.

John Verry (51:46):

But I mean, most of our customers, they come to us to do the testing. They’re not in a position to do their own testing or they can’t afford to do the testing. You know what I mean? Now I’m going to put you into a corner here. I mean, so the problem we’re going to run into is that those standards that you said to not rely on, for someone to say, “Hey, we’re certified to these standards,” are now going to be the things that we’re going to have to tell our customers to look for, because at least if they’re there, it’s better than nothing, right? I mean, it’s not definitive, but the guy that you say, hey, have you done any testing of your stuff and they say no, versus we had a third party test and we’ve been verified to this standard, you’re probably better off in the latter camp than you are in the former camp.

Joe Grand (52:31):

Yeah. That gets into a whole rat hole of things. And then it’s like, “Well, who’s evaluating the device?”

John Verry (52:38):

And who’s evaluating the evaluator?

Joe Grand (52:39):

Who’s evaluating the evaluator. And there’s been plenty of times of things that have passed tests that shouldn’t have passed tests. Or something passes the test and then in production manufacturing-

John Verry (52:50):

They change it.

Joe Grand (52:50):

… elements change. I think it’s going to be something where devices they’re going to use these certifications to say, “This is what we’ve done.” But I think you really want to put the … I mean, it’s hard. You want to put the onus on the product vendor to prove that they have anticipated the physical access or remote access or all of these things that we’ve talked about. Even at a high level though, I think the purchaser or the implementer can question the vendors and not only ask to see their results but ask them if they’re worried about remote access, because that’s what is being used to track all the lettuce in the field or whatever it is. They’re going to need legit remote access.

Joe Grand (53:30):

So how is that being made secure versus an attacker getting remote access, but none of it’s easy. It’s just, if you’re aware of these things, like we’ve talked about, you can at least start that conversation. I would be worried about implementing anything like that without trying to do either some of my own testing or like you said, farm it out, but it’s like when you buy something on Amazon, how many times do you actually evaluate the product before you use it? I’ve never done that. And I sometimes will open it up and look at it, but usually not, you buy it, you plug it in, you deploy it as you would and hope it all works. I hope that the conversation has started enough where people who have to implement these products and maybe don’t have the resources to do all the full testing themselves, at least understand some of the threats that can happen and then push that onto the vendor and say, okay, how are you protecting me against these things?

John Verry (54:27):

Right. And look, like you said, there is no definitive, there is no such thing as 100% secure no matter what type of security we’re talking about. And you do your best job of understanding, like you said, the risk. Understanding what compensating controls you might want to put in place for the areas that you didn’t get a good warm fuzzy on. And then just be aware that, like you said, your responsibility to monitor and ensure. And like you said, I mean, the bigger challenge is that yes, they just had all this stuff tested, but the minute that new firmware comes out, because it has to come out, has that been tested as well? So even if you have this process by which you’ve validated the integrity of the system, initially, you do have to also have a process to go back and make sure that that’s being revalidated by either you or by your customer or by your vendor.

Joe Grand (55:13):

That’s why a lot of people don’t upgrade devices that are deployed in the field. Even computer systems and servers, because that could break something that has not been tested. But just talking about products going back a second, a lot of products are also very implementation specific. So what might appear to be secure or suitably secure for one environment might not be the same for another. And so one example that I thought of too talking about this and kind of asking the vendor if they’re secure and sort of trying to do that before deployment is going back to the parking meter example, the City of San Francisco purchased these parking meters from a vendor who made the parking meter and the city, they were the implementer and they were totally relying on what the vendor told them about the parking meters.

Joe Grand (56:02):

Yes, they’re designed to be against vandalism, somebody putting gum in the thing and all of these different physical destruction types of things, but they didn’t promise anything about security of the actual smart card implementation. And the city is who ended up getting the brunt of the problem because people were not paying for parking by creating fake smart cards. And I only assume this because if I can do this and go public with it, it probably means other people were doing it, who didn’t go public with it. And the implementation was really easy to hack, but if the city had maybe said, okay, how is this secure against people creating fake smart cards to do this, then that would put the vendor … Make them responsible to explain that. But the city inherited the problems of these parking meters and got stuck with that. And then the vendor went back later to try to fix it. But that really shows you that if you’re not able to ask the vendor questions, the vendor might not actually understand your implementation enough to protect against those risks.

John Verry (57:13):

Yeah. This is a perfect wrap on the episode. Because really what you’ve come back to is, this is all fundamental risk management. It’s risk management on their side, and then it’s risk management on your side and understanding the context, understanding your particular use of said product, your technology stack, your capabilities to monitor, maintain the environment, all that fun stuff, and then understanding where the critical risks are and how do I effectively manage them.

Joe Grand (57:37):

That’s right. That’s exactly right. And it’s all very difficult.

John Verry (57:42):

Yeah. I think that’s a great way … You know what, what we should do is you should say that one more last time and then I should hit stop recording and just leave people with that ominous note.

Joe Grand (57:52):

That’s right.

John Verry (57:53):

Listen, dude, this has been great. You brought it today. Thank you.

Joe Grand (57:58):

Cool. Well, I appreciate it. Thanks.

John Verry (58:00):

Listen, how would somebody, if they wanted to work with … You run a company called Grand Idea Studio, if I got that right. Obviously you know your crap. If somebody wanted to reach out to you, how would they go about doing that?

Joe Grand (58:13):

So you can go to my website, which is grandideastudio.com. I am currently updating it. So don’t be afraid of the old looking website, but there’s a contact form there so they can fill that out. You can also follow me on Twitter. I’m @JoeGrand, J-O-E-G-R-A-N-D. And that’s my only social media presence, is Twitter. I’ll update projects I’m working on, but the website really is where I put stuff and happy to answer questions if people have them and so feel free to contact me and thanks for everybody for listening.

John Verry (58:47):

This has been a lot of fun, man. Thank you.

Joe Grand (58:48):

Cool. All right. Thanks again.

Narrator (Intro/Outro) (58:49):

You’ve been listening to the Virtual CISO Podcast, as you probably figured out, we really enjoy information security. If there’s a question we haven’t yet answered, or you need some help, you can reach us at [email protected]. And to ensure you never miss an episode, subscribe to the show in your favorite podcast player. Until next time, let’s be careful out there.